In brief

- Mistral Medium 3.5 is a 128-billion-parameter dense model priced at $1.50 input/$7.50 output per million tokens, well above comparable Chinese alternatives.

- Chinese open source models – Qwen, GLM, and MiMo-V2 – dominate the top of the leaderboard, leaving Mistral as the only Western stronghold.

- Mistral is positioning the release as a building block towards a future major model.

AI company Mistral released Mistral Medium 3.5 on April 29. The Paris-based lab announced a dense model with 128 billion parameters and a host of powerful features, and ran straight into a wall of unimpressed online reactions.

The release came in three parts. First, the model itself. Second, remote coding agents in the Mistral Vibe CLI: cloud coding sessions that can open pull requests on GitHub and run in parallel without anyone sitting at a terminal. Third, a work mode in Le Chat, Mistral’s ChatGPT-style UI, which now handles multi-step autonomous tasks like email sorting, research synthesis, and cross-tool workflows.

Big ambitions, but a messy benchmark reality.

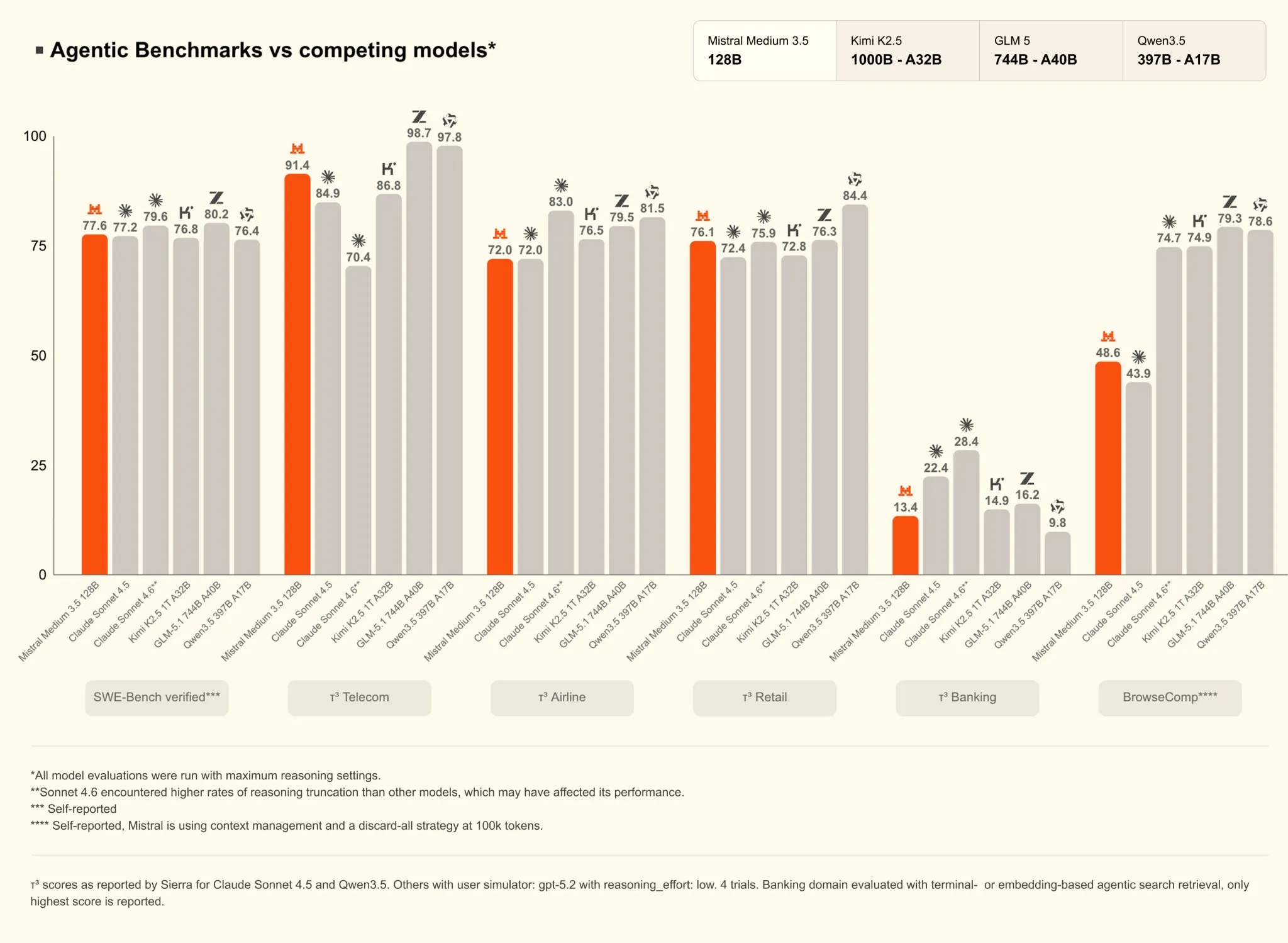

Medium 3.5 scores 77.6% on SWE-Bench Verified, a coding benchmark that tests whether a model can fix real GitHub issues by generating working patches. It also reached 91.4% on τ³-Telecom, which measures effective tool use in specialized environments. Mistral has also merged three previously separate models (Medium 3.1, Magistral, and Devstral 2) into a single set of weights with reasoning effort configurable per request.

A unified model replacing all three is a real engineering win. The problem is what it costs and what it is up against.

Mistral charges $1.50 per million input tokens and $7.50 per million output tokens. Alibaba’s Qwen 3.6, which has 27 billion parameters (less than a quarter as many as Medium 3.5), scores 72.4% on the same SWE-Bench Verified benchmark and ships under Apache 2.0, which means you can download and run it for free.
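To make the pricing concrete, here is a minimal sketch of what a workload would cost at the quoted rates. The token counts are a hypothetical example, not figures from Mistral, and the function name `api_cost` is made up for illustration.

```python
# Prices quoted in the article, in US dollars per million tokens.
INPUT_PRICE_PER_M = 1.50
OUTPUT_PRICE_PER_M = 7.50


def api_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the API cost in dollars for one workload at the quoted prices."""
    input_cost = (input_tokens / 1_000_000) * INPUT_PRICE_PER_M
    output_cost = (output_tokens / 1_000_000) * OUTPUT_PRICE_PER_M
    return input_cost + output_cost


# Hypothetical workload: 20M input tokens and 5M output tokens.
example_cost = api_cost(20_000_000, 5_000_000)
print(f"${example_cost:.2f}")  # prints "$67.50"
```

A self-hosted open-weight model has no per-token fee, but it does carry hardware and operations costs that this sketch does not attempt to model.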

Did you know?

Parameters are the values a model learns during training; they determine its ability to learn, reason, and store information. The more parameters a model has, the broader its knowledge tends to be.

Scroll through the open-source leaderboards and the picture is stark. The top places belong to Alibaba’s Qwen, China’s Zhipu AI’s GLM, and Xiaomi’s MiMo-V2, all of which are cheaper than Mistral’s new release and as capable or more so. Medium 3.5 isn’t even ranked on the major independent leaderboards yet, and third-party evaluations are still pending.

The one bright spot, some argue, is that Mistral is, at this point, the only non-Chinese model with any serious presence in the open-source conversation.

The Internet reacts

Pedro Domingos, a professor of machine learning at the University of Washington, wasn’t so kind:

“Regular AI companies brag about how much better their model is on the benchmarks. Only Mistral brags about how bad their model is.”

He followed this up with a more pointed remark: “I don’t know what’s worse, for Europe to not be in the AI race, or to be represented by a laughingstock like Mistral.”

Youssef Al-Toukhi, founder of YoYo Studios, did the math: Qwen 3.6, with 27 billion parameters, is 4.7 times smaller than Medium 3.5 and achieves similar coding results. Medium 3.5’s output pricing puts it alongside closed models that score significantly higher on every major benchmark.

“If it were not for their political skill, they would be bankrupt by now,” he said.
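The size comparison can be checked directly from the figures quoted in this article. The snippet below is only an illustrative calculation; the variable names are invented here.

```python
# Figures quoted in the article.
MEDIUM_PARAMS_B = 128   # Mistral Medium 3.5 parameters, in billions
QWEN_PARAMS_B = 27      # Qwen 3.6 parameters, in billions
MEDIUM_SWE = 77.6       # SWE-Bench Verified score, percent
QWEN_SWE = 72.4         # SWE-Bench Verified score, percent

# How many times larger Medium 3.5 is than Qwen 3.6.
size_ratio = MEDIUM_PARAMS_B / QWEN_PARAMS_B

# Benchmark gap in percentage points.
score_gap = MEDIUM_SWE - QWEN_SWE

print(f"{size_ratio:.1f}x larger")    # prints "4.7x larger"
print(f"{score_gap:.1f} points gap")  # prints "5.2 points gap"
```

In other words, roughly 4.7 times the parameters buys about 5.2 percentage points on this one benchmark.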

Not everyone was completely dismissive. AI developer Michel Langemeier captured the tension:

“I’m really glad that there is still a non-US and non-Chinese lab trying to build leading LLMs, but we have to up the game in Europe. Their new flagship model is not fundamentally ‘the best’ by any standard, and yet it costs several times more than most competitors.”

Some developers argued that open weights is a durability game rather than a leaderboard game. A model that anyone can download, fine-tune, and self-host doesn’t need to win rankings today to remain relevant. Others pointed to real Mistral deployments across Europe as evidence that the moat is not purely technical.

Geopolitical safety net

This is where Mistral’s actual pitch lives.

European companies subject to GDPR, banks that handle sensitive customer data, and governments that will not route their AI workloads through Chinese infrastructure have limited options. As Decrypt reported last December, HSBC signed a multi-year deal with Mistral specifically to self-host models on its own infrastructure. The appeal of a $14 billion, EU-based open-weight lab doesn’t show up in benchmark tables, but it does show up in purchasing decisions.

Not the best at coding, and not the cheapest. But it’s not American, it’s not Chinese, it’s auditable, it’s self-hostable, and it’s legally safe for European institutions.
