In brief

- Apple CEO Tim Cook has warned that the Mac mini and Mac Studio devices may remain in short supply “for months” after artificial intelligence-driven demand far exceeded the company’s expectations.

- OpenClaw, the open source AI agent platform now backed by OpenAI, has turned Macs built on Apple’s unified memory architecture into go-to machines for running large, local AI models.

- Apple’s M4 Ultra supports up to 192GB of unified memory, allowing developers to run models that can’t fit on any single consumer Nvidia GPU, which maxes out at 32GB of VRAM.

Apple’s Mac mini has always been the quiet, forgettable desktop in the back of the Apple Store. Practical, cheap by Apple’s standards, and largely ignored by the AI crowd. Then OpenClaw happened.

On Thursday, Tim Cook told analysts on Apple’s Q2 2026 earnings call that the Mac mini and Mac Studio are sold out, and could stay that way for months. “Both are great platforms for AI and agent tools,” he said. “Customers are realizing this faster than we expected.”

Translation: Apple miscalculated how much developers wanted these devices, especially in times when scarcity is messing with markets.

Mac revenue reached $8.4 billion for the quarter, up 6% year over year. Not quite an explosion, but supply constraints, not demand, are the limiting factor. Mac mini and Mac Studio configurations with high RAM are not only delayed; some have been pulled from the Apple Store entirely.

The base $599 Mac mini is sold out in the United States, with no delivery or store pickup available. Upgraded configurations with 64GB of RAM show wait times of 16 to 18 weeks. Mac Studio models with 512GB of unified memory have disappeared from the store completely. Scalpers on eBay moved in quickly, listing base models at nearly double the retail price.

The catalyst for all this? OpenClaw and the memory-hungry agentic AI boom.

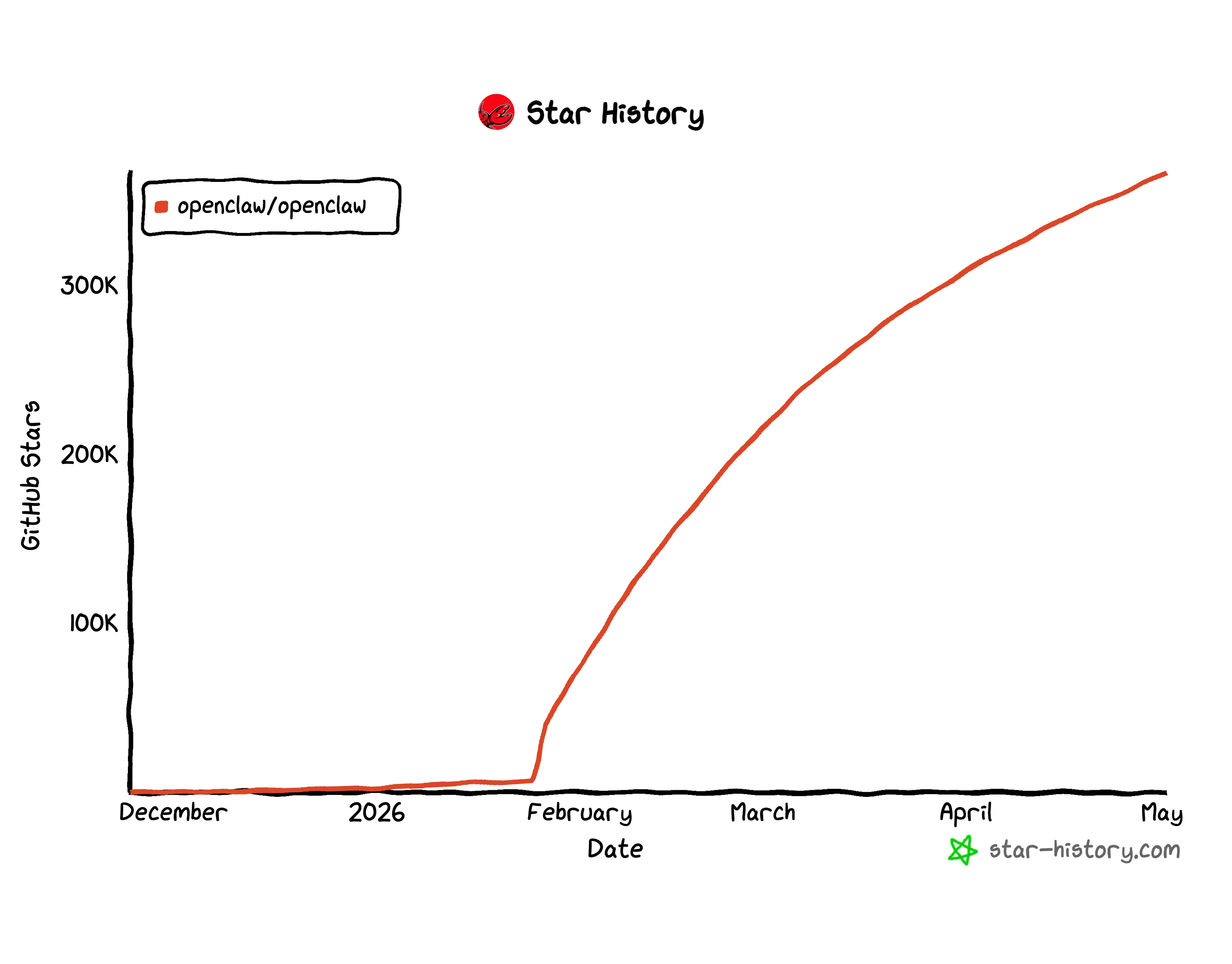

The open source AI agent framework, built by Peter Steinberger and now backed by OpenAI after a bidding war with Meta, has grown to over 323,000 GitHub stars and become the fastest way for individuals and small teams to run persistent AI agents locally. The unofficial reference device for running it became, almost immediately, the Mac mini.

It wasn’t the result of a marketing push though.

What most people covering the Mac shortage miss is that Apple had been irrelevant to serious AI workloads for years. Before AI agents went mainstream, people complained that running LLMs, Stable Diffusion, or any other home AI software on a Mac was slow to the point of being almost unusable. An M2 Mac performed about as well as a GPU from 2019. Apple’s refusal to support CUDA or Nvidia hardware, and its push for its own MLX framework, made the Mac as irrelevant for AI as it was for gaming.

Nvidia ruled because CUDA, its GPU programming framework, was the backbone for model training and inference. The entire AI stack was built around it. Apple had nothing comparable. Nobody wanted a Mac for local inference.

But CUDA has a dirty secret: VRAM limits.

Even Nvidia’s best consumer GPU, the RTX 5090, tops out at 32GB of VRAM. This is a hard ceiling. A model larger than 32GB can’t run at full speed on that card: it spills over into slower system RAM, crawls across the PCIe bus, and performance tanks. To run a serious model with 70 billion parameters on Nvidia hardware, you need multiple GPUs, a server rack, significant power consumption, and thousands of dollars.

Apple’s unified memory architecture (UMA) solves this problem in a way that CUDA cannot. In Apple Silicon, the CPU, GPU, and Neural Engine all share the same physical pool of random access memory (RAM). There is no separate VRAM. There is no PCIe bus to cross. A 64GB Mac mini can load a 70-billion-parameter model that a $1,800 RTX 5090 simply cannot hold.

The M4 Ultra chip—the chip that powers high-end Mac Studio configurations—supports up to 192GB of unified memory. This is enough to run 100 billion parameter models locally on a single machine. There is no server. There is no monthly cloud bill.
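The memory figures above can be sanity-checked with back-of-the-envelope arithmetic. The sketch below is illustrative only and rests on assumptions that are not from the article: weights take roughly parameters × bits per weight ÷ 8 bytes, only weights are counted (no KV cache, activations, or operating system overhead), and about 75% of a device's memory is usable for the model. The function names and the `usable_fraction` parameter are hypothetical.

```python
# Rough estimate of whether a model's weights fit in a device's memory.
# Assumption: footprint ~= parameters * bits_per_weight / 8 bytes, weights only.

def weight_footprint_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate weight size in decimal GB for a model of the given size."""
    # 1 billion parameters at 8 bits per weight is about 1 GB.
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: int,
         memory_gb: float, usable_fraction: float = 0.75) -> bool:
    """True if the weights fit in the assumed usable share of memory."""
    budget_gb = memory_gb * usable_fraction  # hypothetical headroom for everything else
    return weight_footprint_gb(params_billion, bits_per_weight) <= budget_gb


# Memory sizes quoted in the article.
DEVICES = {
    "RTX 5090 (32GB VRAM)": 32,
    "Mac mini (64GB unified)": 64,
    "Mac Studio (192GB unified)": 192,
}

for params, bits in [(70, 16), (70, 4), (100, 8)]:
    size = weight_footprint_gb(params, bits)
    print(f"{params}B parameters at {bits}-bit: about {size:.0f} GB of weights")
    for name, memory_gb in DEVICES.items():
        verdict = "fits" if fits(params, bits, memory_gb) else "does not fit"
        print(f"  {name}: {verdict}")
```

Under these assumptions, a 70B model needs about 35 GB at 4-bit precision, which is more than a 32GB card holds but well within a 64GB unified memory pool. At 16-bit precision the same model needs about 140 GB, so the 64GB claim implicitly depends on quantization.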

OpenClaw made this trade-off clear. Because it runs agents locally, connecting to your files, apps, and messages, users need hardware that can handle the inference load without renting compute from the cloud. A Mac mini with 32GB of unified memory comfortably runs models with 30B parameters. A 128GB Mac Studio handles models that, a year ago, most developers couldn’t touch without an enterprise GPU rig.

A slow Mac capable of running a powerful AI model is much better than a powerful Nvidia card that is unable to even load that model at all.

The result: developers started buying Mac minis the way they used to buy Raspberry Pis, several units at a time, treated as infrastructure rather than personal computers. Apple’s supply chain was never designed for this pattern.

A broader memory shortage is compounding the problem. IDC expects global personal computer shipments to decline 11.3% in 2026, partly due to a shortage of memory chips fueled by demand for AI servers. Apple is now competing for the same supply of RAM as data center builders.

Cook said it could take “several months” to rebalance supply and demand for the Mac mini and Studio. An update to the M5 chip is expected later in 2026, which could ease the pressure, but current buyers are stuck waiting or paying speculative prices.

The Mac mini is more compelling in 2026 than at any time in its twenty-year history, and all it needed was some help from an open source project that Apple had absolutely nothing to do with to make it happen.
